Here's what the first workflow looks like in Keras: First, instantiate a base model with pre-trained weights. The model is then compiled with a `BinaryCrossentropy(from_logits=True)` loss (`metrics=...`) and trained with `model.fit(new_dataset, epochs=20, callbacks=...)`.

Once your model has converged on the new data, you can try to unfreeze all or part of the base model and retrain the whole model end-to-end with a very low learning rate. This is an optional last step that can potentially give you incremental improvements. It could also potentially lead to quick overfitting, so keep that in mind. It is critical to only do this step after the model with frozen layers has been trained to convergence.
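The freeze-then-fine-tune schedule can be illustrated without any deep learning library. The sketch below is a toy stand-in, not Keras code: the "base model" is a hypothetical affine feature `base_w * x + base_b` with made-up "pre-trained" values, the "head" is a single scale factor, and the data, learning rates, and epoch counts are all assumptions chosen for illustration.

```python
# Toy data (assumed for illustration): targets follow y = 3x + 1.
XS = [0.5, 1.0, 1.5, 2.0]
YS = [3.0 * x + 1.0 for x in XS]


def predict(params, x):
    # The "base model" is the feature base_w * x + base_b; the "head" scales it.
    return params["head"] * (params["base_w"] * x + params["base_b"])


def mse(params):
    # Mean squared error over the toy dataset.
    return sum((predict(params, x) - y) ** 2 for x, y in zip(XS, YS)) / len(XS)


def gradients(params):
    # Analytic gradients of the mean squared error for all three parameters.
    n = len(XS)
    grads = {"head": 0.0, "base_w": 0.0, "base_b": 0.0}
    for x, y in zip(XS, YS):
        feature = params["base_w"] * x + params["base_b"]
        err = 2.0 * (params["head"] * feature - y) / n
        grads["head"] += err * feature
        grads["base_w"] += err * params["head"] * x
        grads["base_b"] += err * params["head"]
    return grads


def train(params, trainable, learning_rate, epochs):
    # Plain gradient descent that only updates the parameters named in `trainable`.
    for _ in range(epochs):
        grads = gradients(params)
        for name in trainable:
            params[name] -= learning_rate * grads[name]
    return params


# Hypothetical "pre-trained" base weights plus a freshly initialised head.
params = {"head": 0.0, "base_w": 1.5, "base_b": 0.2}

# Phase 1: base frozen, only the head is trained until it converges.
train(params, trainable=["head"], learning_rate=0.1, epochs=20)
loss_frozen = mse(params)
base_after_phase1 = (params["base_w"], params["base_b"])

# Phase 2: unfreeze everything and continue with a much lower learning rate.
train(params, trainable=["head", "base_w", "base_b"], learning_rate=0.01, epochs=200)
loss_finetuned = mse(params)

print(f"loss with frozen base: {loss_frozen:.4f}")
print(f"loss after fine-tuning: {loss_finetuned:.4f}")
```

The frozen base cannot represent the target exactly, so the head alone plateaus at a nonzero loss; unfreezing the base with a small learning rate then improves on that plateau without disturbing the already-converged head much.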

In the second workflow, the base model only runs once on your data, rather than once per epoch of training, so it's a lot faster and cheaper.

An issue with that second workflow, though, is that it doesn't allow you to dynamically modify the input data of your new model during training, which is required when doing data augmentation, for instance. Transfer learning is typically used for tasks where your new dataset has too little data to train a full-scale model from scratch, and in such scenarios data augmentation is very important.
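The cost difference between the two workflows comes down to how often the base model is called. The following sketch counts those calls with a hypothetical stand-in for the frozen base model; `CountingBase`, `augment`, the sample values, and the epoch count are illustrative assumptions, not part of any library.

```python
import random


class CountingBase:
    """Hypothetical stand-in for an expensive, frozen base model."""

    def __init__(self):
        self.calls = 0

    def __call__(self, x):
        # Count every forward pass and return some fixed "features".
        self.calls += 1
        return (x, x * x)


def augment(x, rng):
    # Illustrative augmentation: small random jitter on the input.
    return x + rng.uniform(-0.1, 0.1)


def train_end_to_end(base, data, epochs, rng):
    # First workflow: the base model sees (freshly augmented) inputs every epoch.
    for _ in range(epochs):
        for x in data:
            features = base(augment(x, rng))
            # ... the new head would be updated from `features` here ...


def train_on_cached_features(base, data, epochs):
    # Second workflow: run the base model once, then reuse its outputs.
    cached = [base(x) for x in data]
    for _ in range(epochs):
        for features in cached:
            # ... the new head would be updated from `features` here ...
            pass


data = [0.1, 0.2, 0.3, 0.4, 0.5]
epochs = 20

base_a = CountingBase()
train_end_to_end(base_a, data, epochs, random.Random(0))

base_b = CountingBase()
train_on_cached_features(base_b, data, epochs)

print(base_a.calls, base_b.calls)
```

With 5 samples and 20 epochs, the first workflow calls the base model 100 times and the second only 5 times. The cached features are fixed, though, so there is no point in the second workflow at which an augmented input could reach the base model.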

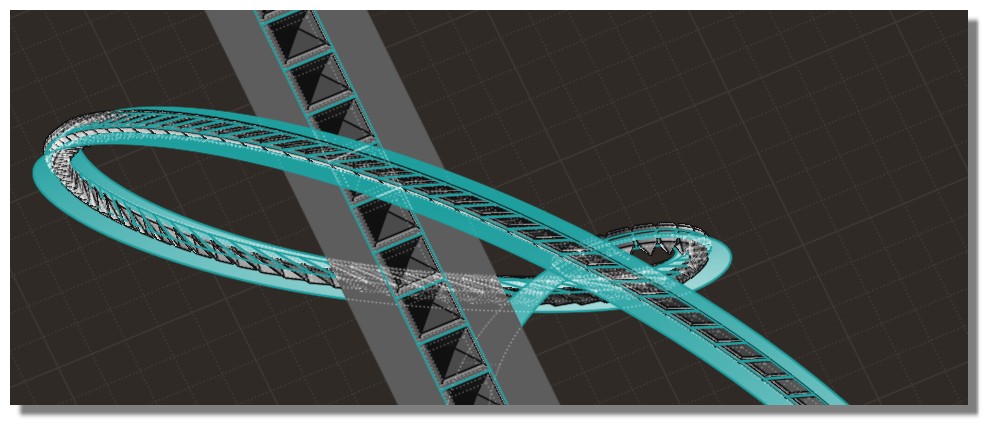

I'm working on an animation of a mechanical keyboard and I'm having trouble getting a file that's not huge in size but doesn't lose quality. The problem is that the file is a STEP file, and as far as I know I only have two options. Either I import the file directly into Blender as STL or STEP (with the addon), which would give me the file with all the details and the hierarchy of the original file, but it would also be massive (1 GB at least) and too much for me to actually work with. Or I use MOI3D to reduce the file and convert it to OBJ, but with the standard options I lose the folders and the hierarchy of the original file (the file is a mess, and I can't use it because there are too many elements that don't belong to a folder, see the image).